Decentralizing pretraining: Exploring distributed networks through SN9’s new whitepaper

Decentralized networks hold inherent advantages over centralized competitors, but these must be proven in practice. Our first whitepaper reveals how we're delivering distributed pretraining at scale.

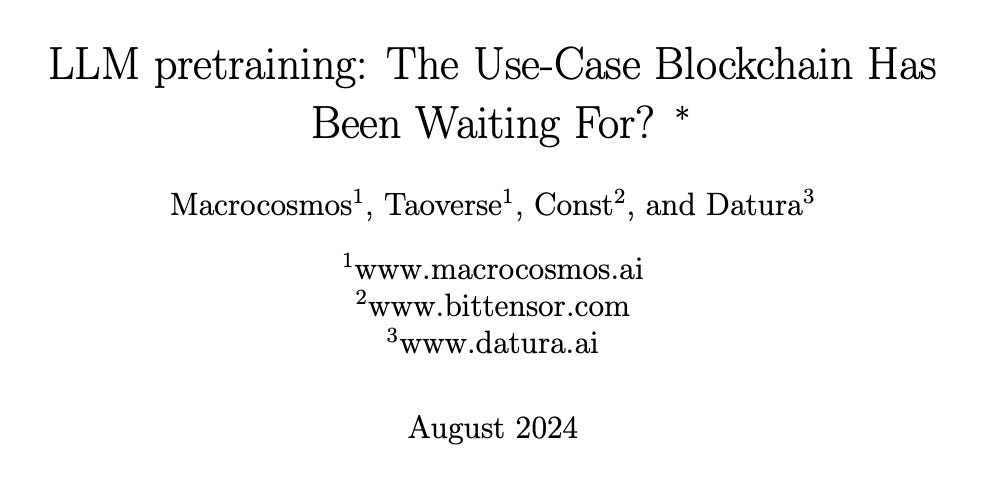

Pretraining is the most computationally intense stage of AI model development. Established AI firms are spending huge amounts to assemble the computing power they need to pretrain models. Meta’s Llama 3.1 8B took 1.46 million H100 GPU hours to train, while the larger 405B model took a staggering 30.84 million H100 GPU hours.

Pretraining incurs very high costs due to its enormous energy consumption and reliance on specialized hardware and infrastructure. The computing power required for a state-of-the-art LLM doubles every 10 months. While some of this increasing compute is offset by greater efficiency (due to both modern techniques and Moore’s Law), it still translates into high costs for model development.
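To make the doubling claim concrete, here is a minimal sketch of the arithmetic behind it. It assumes clean exponential growth with a fixed 10-month doubling period and ignores the efficiency gains mentioned above; the function name `compute_growth_factor` is ours, purely for illustration.

```python
def compute_growth_factor(months: float, doubling_months: float = 10.0) -> float:
    """Multiplier on required compute after `months`, assuming it doubles
    every `doubling_months` (an idealized model, not a forecast)."""
    if doubling_months <= 0:
        raise ValueError("doubling_months must be positive")
    return 2.0 ** (months / doubling_months)


if __name__ == "__main__":
    for months in (10, 20, 60):
        factor = compute_growth_factor(months)
        print(f"after {months} months: {factor:.0f}x the compute")
```

Under these assumptions, five years of growth at this rate corresponds to a 64-fold increase in required compute.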

As the cost of model training increases exponentially, only the very largest companies can access and pay for pretraining and fine-tuning on the scale needed for SOTA models. SN9, the pretraining subnet on Bittensor, offers an alternative: a unique incentive mechanism that rewards miners for producing high-quality models within a set of constraints, such as model size.

Over the past few months, our SN9 engineers have documented their progress in honing pretraining incentivisation, resulting in the publication of our first technical whitepaper. The paper presents SN9’s design, refinement, and results, revealing how our team has iterated their way towards a distributed alternative to one of machine learning’s most computationally intensive challenges.

Pretraining is a logical use case for the Bittensor ecosystem, as pretrained models can then power other intelligence-based subnets. It is a pillar of the ecosystem: even marginal improvements to pretraining can have significant downstream benefits for other Bittensor-based projects.

Pretraining on distributed networks therefore offers one of the clearest use cases for developing a decentralized alternative. Building on Bittensor allows us to subsidize that training cost while providing a platform for skilled practitioners to monetize their expertise and bring value to the wider ecosystem in the form of open models. An effective pretraining subnet on Bittensor can deliver SOTA intelligence while driving down cost, providing the foundation that other subnets and model builders can fine-tune for specific use cases.

Appropriate architecture

Decentralized systems for training SOTA models present unique challenges. To reap the benefits, our design choices must align Subnet 9’s goals with the behaviors of its participants. Our three guiding questions are:

How do we identify and compensate individual contributions?

How do we incentivise continuous improvement?

How do we mitigate risks?

Each of these questions ultimately leads us to maximize the competitive element of the subnet architecture, while reducing the risk posed by bad actors. SN9’s design establishes ownership through a UID. Miners upload their pretrained models onto Hugging Face. A winner-takes-all reward function compensates the top-performing model with most (95% or more) of the miners’ emissions on the subnet, while every other model receives almost nothing.
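The winner-takes-all idea can be sketched in a few lines of Python. This is an illustration under stated assumptions, not SN9's actual reward code: we assume each UID has a single evaluation loss where lower is better, that the winner receives exactly 95% of miner emissions, and that the remainder is split evenly among everyone else. The function name `winner_takes_all` and the even split are hypothetical.

```python
from typing import Dict


def winner_takes_all(losses: Dict[int, float], top_share: float = 0.95) -> Dict[int, float]:
    """Map each UID to a share of miner emissions.

    Assumptions (ours, for illustration): lower loss wins, the winner gets
    `top_share`, and the rest is divided evenly among the other UIDs.
    """
    if not losses:
        return {}
    winner = min(losses, key=losses.get)  # UID with the lowest loss
    others = [uid for uid in losses if uid != winner]
    if not others:
        return {winner: 1.0}  # a lone miner takes everything
    remainder = (1.0 - top_share) / len(others)
    shares = {uid: remainder for uid in others}
    shares[winner] = top_share
    return shares


if __name__ == "__main__":
    # Hypothetical UIDs and losses.
    print(winner_takes_all({1: 2.31, 2: 2.29, 3: 2.40}))
```

In this example UID 2 has the lowest loss and takes 95% of emissions, while UIDs 1 and 3 share the remaining 5%.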

To be eligible for reward, new models have to cross the ‘Epsilon Threshold’: a minimum improvement on the loss function required to beat an earlier model on the leaderboard, which at the time of writing is set to 0.5%. This threshold is high enough to ensure stability on the subnet, so that minuscule changes in model performance do not constantly change the top-performing model and any new winner has significantly improved on the existing best-performing model.

Yet 0.5% is also low enough to motivate miners to beat the incumbent top model, incentivising a continuous stream of increasingly powerful models. This design drives relentless, competitive, and meaningful improvement.
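As a rough sketch of how such a threshold could be applied, the check below treats the 0.5% as a relative improvement in loss over the incumbent. That interpretation, along with the names `EPSILON` and `beats_incumbent`, is our assumption for illustration; the whitepaper is the authority on the exact comparison SN9 uses.

```python
EPSILON = 0.005  # 0.5%, the threshold quoted at the time of writing


def beats_incumbent(challenger_loss: float, incumbent_loss: float,
                    epsilon: float = EPSILON) -> bool:
    """Return True only if the challenger's loss is lower than the
    incumbent's by more than `epsilon`, measured relative to the incumbent
    (an assumed reading of the threshold)."""
    return challenger_loss < incumbent_loss * (1.0 - epsilon)


if __name__ == "__main__":
    incumbent = 2.0  # hypothetical loss of the current top model
    for challenger in (1.98, 1.995, 2.0):
        print(challenger, beats_incumbent(challenger, incumbent))
```

With an incumbent loss of 2.0, a challenger needs a loss below 1.99 to take the top spot, so 1.98 qualifies while 1.995 and an exact copy at 2.0 do not.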

Once a better model is added to the subnet, it is publicly available for every other miner to use as a starting point for further training. This creates an incentive for miners to constantly build upon the current best-performing model, delivering long-term performance improvement to the subnet while minimizing the risk of one miner locking in an insurmountable advantage. It also lowers the barrier to entry, as new entrants are not required to train a model from scratch.

Confronting collusion and cabals

Decentralized networks hold huge promise, but they also present unique risks. Every subnet on Bittensor faces the threat of cabals, and the specific nature of cabalistic risk depends on the idiosyncrasies of the subnet’s design. That’s why each subnet must develop its own approach to prevention.

On Subnet 9, we have designed the subnet to minimize the potential for collusion between teams of miners. The winner-takes-all compensation model incentivizes competition and reduces the risk of teams colluding. There is no economy of scale for individual actors who run multiple miners, as only a single model can win at once. Miners who are at the top of the leaderboard get no further benefit from working with other teams when they are already receiving all the rewards of the competition. Only by improving upon the best model can a second team receive rewards.

Another perennial risk for subnets is that individual miners will attempt to exploit the incentive mechanism. SN9’s ‘Epsilon Threshold’ guards against this by rewarding only meaningful contributions, rather than random modifications to a model’s weights that achieve only minor improvements in performance.

With SN9, we are building the foundations of a platform which will underpin years, if not decades, of model development. To deliver that potential, we’ve carefully considered the security and ethical consequences of our work on Bittensor. In terms of security, we ensure that miners only upload weights rather than code, which limits the risk posed by rogue miners. To address concerns around data privacy, we made the design decision to rely only on free, publicly available data sources for model pretraining. Central to our approach to building the pretraining subnet is sharing as much performance data as possible with the community through our dashboards, which publish information such as the network loss in real time.

Since launch, we’ve continuously benchmarked performance on the pretraining subnet against comparable models. The results have confirmed our starting hypothesis: models trained within Bittensor have been able to match or outperform models of a comparable size. We’ll share results in our next post, and explore how decentralized pretraining could lead to even greater breakthroughs in future.